In the era of hybrid cloud and massive data growth, observability has become essential for both security and operational resilience. However, many organizations are facing a painful paradox: the very data needed for critical visibility is breaking the bank.

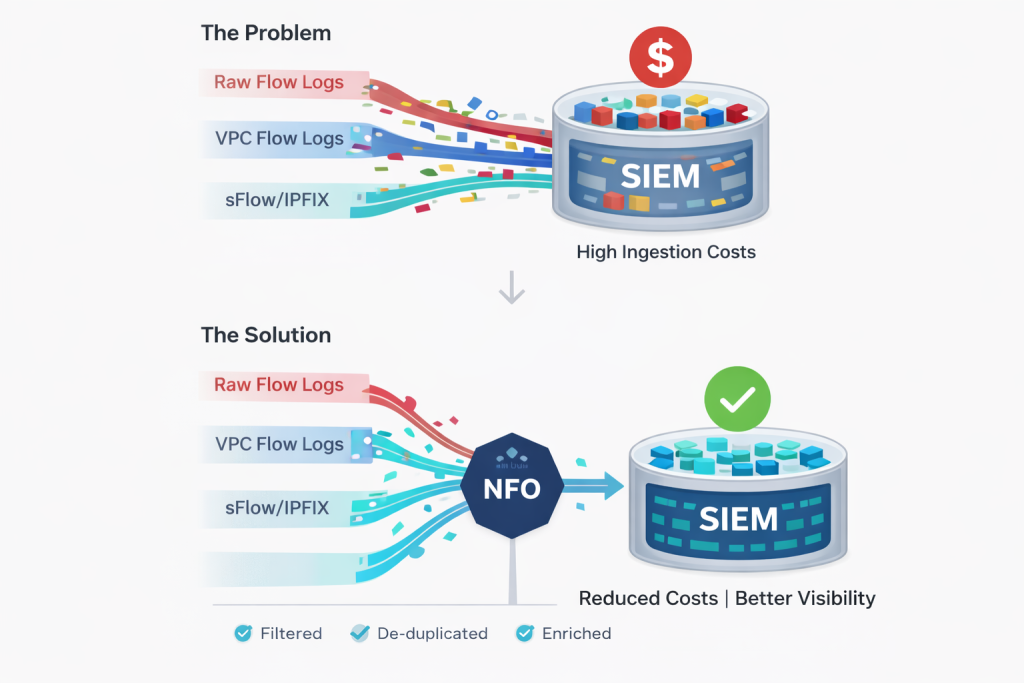

Security and network teams have traditionally sent all raw, unsampled flow data (NetFlow, IPFIX, VPC Flow Logs) directly to their SIEM or logging platform so that no potential threat or performance issue goes unseen. But this “collect everything” mentality has a large hidden cost. Sending raw, redundant data at scale drives up ingestion fees and storage costs, and it buries the SIEM in so much noise that critical signals become harder to find.

The path to cost-effective observability isn’t simply cutting data. It’s optimizing the observability pipeline.

The Problem: When Raw Data Overwhelms Your SIEM

Sending raw flow logs to a SIEM creates a multi-layered problem:

- Ingestion Costs: Most SIEM and logging platforms (like Splunk or Microsoft Sentinel) charge based on the volume of data ingested. Raw flow data is extremely chatty, often representing one of the largest data sources by volume in an organization.

- Redundancy and Noise: Flow logs are naturally repetitive. A single long-lived connection can generate multiple, almost identical flow records, because exporters typically report on an active flow at regular intervals rather than once when it ends. Furthermore, multiple network devices often report on the same flow, so the same traffic is recorded several times as it traverses different segments. Sending all this duplicate and low-value data is wasted spend.

- Search and Analysis Pain: A SIEM buried under a mountain of repetitive flow logs is slower to search. Security analysts and network engineers waste valuable time sifting through thousands of benign connections to find the one event that matters.

The Solution: Optimizing at the Source with NFO

NetFlow Optimizer (NFO) provides the critical data-processing layer before your telemetry ever hits your SIEM. By processing and optimizing this data at the source—the edge of your network or cloud environment—NFO can dramatically reduce the volume while increasing the quality and usability of your data.

1. Intelligent Aggregation and Flow Stitching

NFO reduces data volume by moving beyond the model in which every exported flow record is forwarded to the SIEM as-is. Instead of flooding your SIEM with repetitive entries, NFO aggregates the data, summarizing multiple near-identical flow records for the same connection into a single, comprehensive “golden record”. This cuts ingestion volume while maintaining visibility into the overall connection. NFO also performs flow stitching: it combines the separate records that describe one conversation across the network into a unified view. Your analytics platform receives a clear, high-fidelity narrative of network activity rather than a fragmented mountain of raw logs.
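To make the aggregation idea concrete, here is a minimal Python sketch. It is not NFO's implementation. It assumes each flow record is a dictionary with hypothetical field names (`src`, `dst`, `sport`, `dport`, `proto`, `bytes`, `packets`, `start`, `end`) and simply folds every record that shares a 5-tuple into one summary.

```python
def aggregate_flows(records):
    """Fold records that share a 5-tuple into one summary record each.

    Field names are assumptions made for this sketch, not a real schema.
    """
    summaries = {}
    for r in records:
        key = (r["src"], r["dst"], r["sport"], r["dport"], r["proto"])
        s = summaries.get(key)
        if s is None:
            # First record for this connection: start a new summary.
            summaries[key] = {
                "src": r["src"], "dst": r["dst"],
                "sport": r["sport"], "dport": r["dport"], "proto": r["proto"],
                "bytes": r["bytes"], "packets": r["packets"],
                "start": r["start"], "end": r["end"],
                "record_count": 1,
            }
        else:
            # Later record for the same connection: add counters, widen the time span.
            s["bytes"] += r["bytes"]
            s["packets"] += r["packets"]
            s["start"] = min(s["start"], r["start"])
            s["end"] = max(s["end"], r["end"])
            s["record_count"] += 1
    return list(summaries.values())
```

Three interim records for one long-lived HTTPS session would come out as a single record whose byte and packet counts are the sums of the three, with `record_count` set to 3. A production pipeline would also need to decide when a summary is complete and can be flushed, which this sketch leaves out.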

2. Real-Time Data Enrichment for Context

While NFO focuses on volume reduction, it simultaneously elevates the overall utility of your telemetry through a process of real-time enrichment. Instead of delivering raw, anonymous data points, NFO injects multi-dimensional context into every optimized flow record before it ever reaches your SIEM. This includes critical layers such as User Identity (correlating IP addresses to actual usernames), Security Reputation (flagging known malicious actors), Geolocation (pinpointing the origin of traffic), and Application Context (identifying the specific service in use).
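As a rough illustration of what enrichment means in practice, the Python sketch below adds the four kinds of context to a flow record. Everything in it is an assumption for the example: the lookup tables, the field names, and the placeholder country code are hypothetical, and a real deployment would draw on an identity source, a threat feed, and a geolocation database rather than hard-coded dictionaries.

```python
import ipaddress

# Hypothetical lookup tables, hard-coded only for illustration.
USER_BY_IP = {"10.0.0.5": "alice"}
KNOWN_BAD_IPS = {"203.0.113.9"}
COUNTRY_BY_NETWORK = {ipaddress.ip_network("203.0.113.0/24"): "ZZ"}  # placeholder code
APP_BY_PROTO_PORT = {(6, 443): "https", (17, 53): "dns"}


def lookup_country(ip):
    """Return the country code of the first network containing ip, else None."""
    addr = ipaddress.ip_address(ip)
    for network, country in COUNTRY_BY_NETWORK.items():
        if addr in network:
            return country
    return None


def enrich(flow):
    """Return a copy of flow with identity, reputation, geo, and app context."""
    enriched = dict(flow)
    enriched["user"] = USER_BY_IP.get(flow["src"])
    enriched["dst_malicious"] = flow["dst"] in KNOWN_BAD_IPS
    enriched["dst_country"] = lookup_country(flow["dst"])
    enriched["app"] = APP_BY_PROTO_PORT.get((flow["proto"], flow["dport"]), "unknown")
    return enriched
```

The point of enriching before ingestion is that an analyst can then search the SIEM for a username or a reputation flag directly, instead of joining raw IP addresses against other data sources at query time.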

3. Intelligently De-duplicating Multiple Views

When a single flow passes through three different switches on its way through your network, each switch often exports its own flow record for it, producing three separate records of the same traffic. NFO’s intelligent de-duplication identifies these multi-point perspectives of the same flow and consolidates them into a single, definitive “golden record.” This single step alone can eliminate over 50% of the volume for many common network architectures.
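The sketch below shows one simple way such de-duplication could work; it is an illustration under stated assumptions, not a description of NFO's internals. It assumes each record carries a hypothetical `exporter` field naming the device that reported it, and it treats records with the same 5-tuple whose start times fall in the same time bucket as views of one flow.

```python
def deduplicate(records, window_seconds=60):
    """Keep one record per flow seen by several exporters.

    Records match when they share a 5-tuple and their start times fall
    in the same window_seconds bucket. This is a simplification: flows
    that straddle a bucket boundary, or whose addresses are rewritten
    by NAT between devices, would not be matched here.
    """
    kept = {}
    for r in records:
        key = (r["src"], r["dst"], r["sport"], r["dport"], r["proto"],
               r["start"] // window_seconds)
        existing = kept.get(key)
        if existing is None:
            # First sighting: copy the record and start a list of exporters.
            merged = {k: v for k, v in r.items() if k != "exporter"}
            merged["exporters"] = [r["exporter"]]
            kept[key] = merged
        else:
            # Another device saw the same flow: note it, keep the largest count.
            existing["exporters"].append(r["exporter"])
            existing["bytes"] = max(existing["bytes"], r["bytes"])
    return list(kept.values())
```

With three switches reporting the same flow, three input records become one, which is a two-thirds reduction for that flow. Keeping the list of exporters preserves the path information that would otherwise be lost when the duplicates are dropped.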

By delivering a high-fidelity, identity-enriched record instead of thousands of raw flow records, NFO gives your SIEM much more valuable information, which is easier to search, analyze, and automate against.

Conclusion: Gaining Control of Your Observability Budget

You shouldn’t have to choose between a secure network and a balanced budget. Optimizing your observability pipeline with NetFlow Optimizer allows you to achieve both.

By aggregating, de-duplicating, and enriching data at the source, NFO can reduce your SIEM ingestion costs by up to 80% while simultaneously providing cleaner, more context-rich data. Your security analysts can focus on real threats, your network engineers can find root causes faster, and your budget stays under control. Your SIEM doesn’t need more data; it needs better context.

Are you ready to cut your SIEM costs while actually improving visibility?

[Contact us today] to learn how NFO can optimize your observability pipeline, or [Schedule a Demo] to see our cost-reduction capabilities in action.